Even in a world increasingly battered by weather extremes, the summer 2021 heat wave in the Pacific Northwest stood out. For several days in late June, cities such as Vancouver, Portland and Seattle baked in record temperatures that killed hundreds of people. On June 29, Lytton, a village in British Columbia, set an all-time heat record for Canada, at 121° Fahrenheit (49.6° Celsius); the next day, the village was incinerated by a wildfire.

Within a week, an international group of scientists had analyzed this extreme heat and concluded it would have been virtually impossible without climate change caused by humans. The planet’s average surface temperature has risen by at least 1.1 degrees Celsius since preindustrial levels of 1850–1900. The reason: People are loading the atmosphere with heat-trapping gases produced during the burning of fossil fuels, such as coal and gas, and from cutting down forests.

A little over 1 degree of warming may not sound like a lot. But it has already been enough to fundamentally transform how energy flows around the planet. The pace of change is accelerating, and the consequences are everywhere. Ice sheets in Greenland and Antarctica are melting, raising sea levels and flooding low-lying island nations and coastal cities. Drought is parching farmlands and the rivers that feed them. Wildfires are raging. Rains are becoming more intense, and weather patterns are shifting.

The roots of understanding this climate emergency trace back more than a century and a half. But it wasn’t until the 1950s that scientists began the detailed measurements of atmospheric carbon dioxide that would prove how much carbon is pouring from human activities. Beginning in the 1960s, researchers started developing comprehensive computer models that now illuminate the severity of the changes ahead.

Today we know that climate change and its consequences are real, and we are responsible. The emissions that people have been putting into the air for centuries — the emissions that made long-distance travel, economic growth and our material lives possible — have put us squarely on a warming trajectory. Only drastic cuts in carbon emissions, backed by collective global will, can make a significant difference.

“What’s happening to the planet is not routine,” says Ralph Keeling, a geochemist at the Scripps Institution of Oceanography in La Jolla, Calif. “We’re in a planetary crisis.”


Setting the stage

One day in the 1850s, Eunice Newton Foote, an amateur scientist and a women’s rights activist living in upstate New York, put two glass jars in sunlight. One contained regular air — a mix of nitrogen, oxygen and other gases including carbon dioxide — while the other contained just carbon dioxide. Both had thermometers in them. As the sun’s rays beat down, Foote observed that the jar of CO2 alone heated up more quickly, and was slower to cool down, than the one containing plain air.

The results prompted Foote to muse on the relationship between CO2, the planet and heat. “An atmosphere of that gas would give to our earth a high temperature,” she wrote in an 1856 paper summarizing her findings.

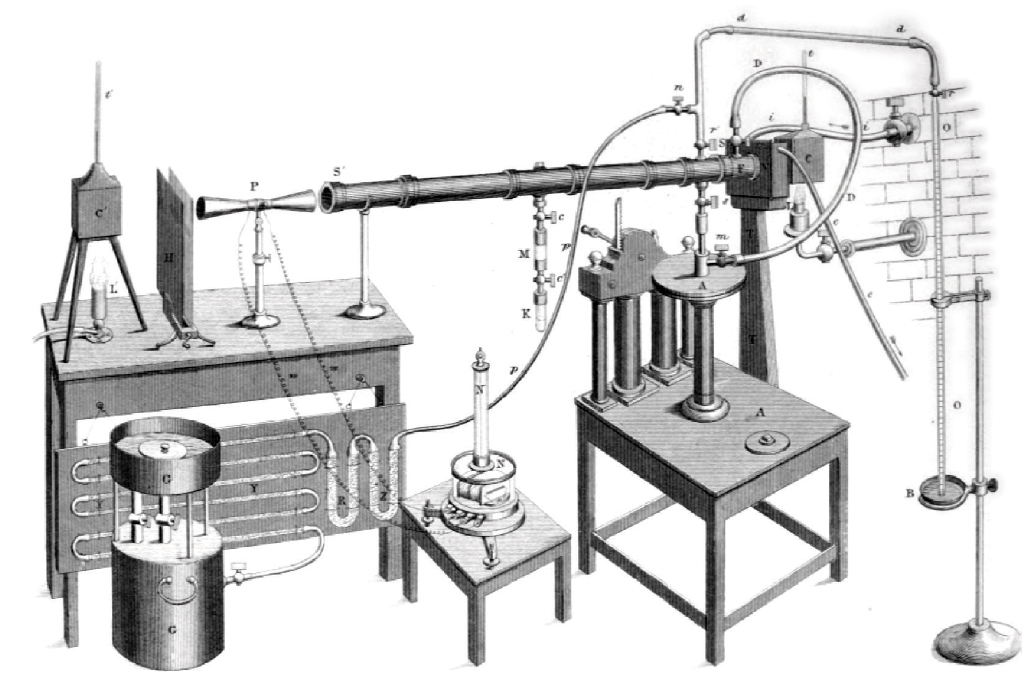

Three years later, working independently and apparently unaware of Foote’s discovery, Irish physicist John Tyndall showed the same basic idea in more detail. With a set of pipes and devices to study the transmission of heat, he found that CO2 gas, as well as water vapor, absorbed more heat than air alone. He argued that such gases would trap heat in Earth’s atmosphere, much as panes of glass trap heat in a greenhouse, and thus modulate climate.

Today Tyndall is widely credited with the discovery of how what we now call greenhouse gases heat the planet, earning him a prominent place in the history of climate science. Foote faded into relative obscurity — partly because of her gender, partly because her measurements were less sensitive. Yet their findings helped kick off broader scientific exploration of how the composition of gases in Earth’s atmosphere affects global temperatures.

Carbon floods in

Humans began substantially affecting the atmosphere around the turn of the 19th century, when the Industrial Revolution took off in Britain. Factories burned tons of coal; powered by fossil fuels, the steam engine revolutionized transportation and other industries. Since then, fossil fuels including oil and natural gas have been harnessed to drive a global economy. All these activities belch gases into the air.

Yet Swedish physical chemist Svante Arrhenius wasn’t worried about the Industrial Revolution when he began thinking in the late 1800s about changes in atmospheric CO2 levels. He was instead curious about ice ages — including whether a decrease in volcanic eruptions, which can put carbon dioxide into the atmosphere, would lead to a future ice age. Bored and lonely in the wake of a divorce, Arrhenius set himself to months of laborious calculations involving moisture and heat transport in the atmosphere at different zones of latitude. In 1896, he reported that halving the amount of CO2 in the atmosphere could indeed bring about an ice age — and that doubling CO2 would raise global temperatures by around 5 to 6 degrees C.

It was a remarkably prescient finding for work that, out of necessity, had simplified Earth’s complex climate system down to just a few variables. But Arrhenius’ findings didn’t gain much traction with other scientists at the time. The climate system seemed too large, complex and inert to change in any meaningful way on a timescale that would be relevant to human society. Geologic evidence showed, for instance, that ice ages took thousands of years to start and end. What was there to worry about?

One researcher, though, thought the idea was worth pursuing. Guy Stewart Callendar, a British engineer and amateur meteorologist, had tallied weather records over time, obsessively enough to determine that average temperatures were increasing at 147 weather stations around the globe. In a 1938 paper in a Royal Meteorological Society journal, he linked this temperature rise to the burning of fossil fuels. Callendar estimated that fossil fuel burning had put around 150 billion metric tons of CO2 into the atmosphere since the late 19th century.

Like many of his day, Callendar didn’t see global warming as a problem. Extra CO2 would surely stimulate plants to grow and allow crops to be farmed in new regions. “In any case the return of the deadly glaciers should be delayed indefinitely,” he wrote. But his work revived discussions tracing back to Tyndall and Arrhenius about how the planetary system responds to changing levels of gases in the atmosphere. And it began steering the conversation toward how human activities might drive those changes.

When World War II broke out the following year, the global conflict redrew the landscape for scientific research. Hugely important wartime technologies, such as radar and the atomic bomb, set the stage for “big science” studies that brought nations together to tackle high-stakes questions of global reach. And that allowed modern climate science to emerge.

The Keeling curve

One major effort was the International Geophysical Year, or IGY, an 18-month push in 1957–1958 that involved a wide array of scientific field campaigns including exploration in the Arctic and Antarctica. Climate change wasn’t a high research priority during the IGY, but some scientists in California, led by Roger Revelle of the Scripps Institution of Oceanography, used the funding influx to begin a project they’d long wanted to do. The goal was to measure CO2 levels at different locations around the world, accurately and consistently.

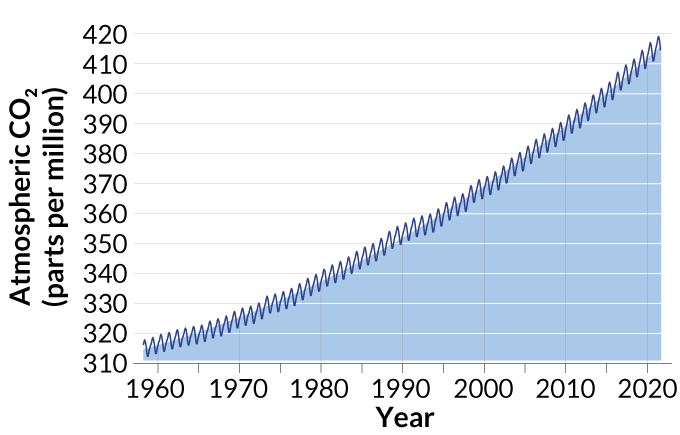

The job fell to geochemist Charles David Keeling, who put ultraprecise CO2 monitors in Antarctica and on the Hawaiian volcano of Mauna Loa. Funds soon ran out to maintain the Antarctic record, but the Mauna Loa measurements continued. Thus was born one of the most iconic datasets in all of science — the “Keeling curve,” which tracks the rise of atmospheric CO2.

When Keeling began his measurements in 1958, CO2 made up 315 parts per million of the global atmosphere. Within just a few years it became clear that the number was increasing year by year. Because plants take up CO2 as they grow in spring and summer and release it as they decompose in fall and winter, CO2 concentrations rose and fell each year in a sawtooth pattern. But superimposed on that pattern was a steady march upward.

“The graph got flashed all over the place; it was just such a striking image,” says Ralph Keeling, who is Charles David Keeling’s son. Over the years, as the curve marched higher, “it had a really important role historically in waking people up to the problem of climate change.” The Keeling curve has been featured in countless earth science textbooks, in congressional hearings and in Al Gore’s 2006 documentary on climate change, An Inconvenient Truth.

Each year the curve keeps going up: In 2016, it passed 400 ppm of CO2 in the atmosphere as measured during its typical annual minimum in September. Today it is at 413 ppm. (Before the Industrial Revolution, CO2 levels in the atmosphere had been stable for centuries at around 280 ppm.)

Around the time that Keeling’s measurements were kicking off, Revelle also helped develop an important argument that the CO2 from human activities was building up in Earth’s atmosphere. In 1957, he and Hans Suess, also at Scripps at the time, published a paper that traced the flow of radioactive carbon through the oceans and the atmosphere. They showed that the oceans were not capable of taking up as much CO2 as previously thought; the implication was that much of the gas must be going into the atmosphere instead.

“Human beings are now carrying out a large-scale geophysical experiment of a kind that could not have happened in the past nor be reproduced in the future,” Revelle and Suess wrote in the paper. It’s one of the most famous sentences in earth science history.

Here was the insight underlying modern climate science: Atmospheric carbon dioxide is increasing, and humans are causing the buildup. Revelle and Suess became the final piece in a puzzle dating back to Svante Arrhenius and John Tyndall. “I tell my students that to understand the basics of climate change, you need to have the cutting-edge science of the 1860s, the cutting-edge math of the 1890s and the cutting-edge chemistry of the 1950s,” says Joshua Howe, an environmental historian at Reed College in Portland, Ore.

Evidence piles up

Observational data collected throughout the second half of the 20th century helped researchers gradually build their understanding of how human activities were transforming the planet.

Ice cores pulled from ice sheets, such as that atop Greenland, offer some of the most telling insights for understanding past climate change. Each year, snow falls atop the ice and compresses into a fresh layer of ice representing climate conditions at the time it formed. The abundance of certain forms, or isotopes, of oxygen and hydrogen in the ice allows scientists to calculate the temperature at which it formed, and air bubbles trapped within the ice reveal how much carbon dioxide and other greenhouse gases were in the atmosphere at that time. So drilling down into an ice sheet is like reading the pages of a history book that go back in time the deeper you go.

Scientists began reading these pages in the early 1960s, using ice cores drilled at a U.S. military base in northwest Greenland. Contrary to expectations that past climates were stable, the cores hinted that abrupt climate shifts had happened over the last 100,000 years. By 1979, an international group of researchers was pulling another deep ice core from a second location in Greenland — and it, too, showed that abrupt climate change had occurred in the past. In the late 1980s and early 1990s, a pair of European- and U.S.-led drilling projects retrieved even deeper cores from near the top of the ice sheet, pushing the record of past temperatures back a quarter of a million years.

Together with other sources of information, such as sediment cores drilled from the seafloor and molecules preserved in ancient rocks, the ice cores allowed scientists to reconstruct past temperature changes in extraordinary detail. Many of those changes happened alarmingly fast. For instance, the climate in Greenland warmed abruptly more than 20 times in the last 80,000 years, with the changes occurring in a matter of decades. More recently, a cold spell that set in around 13,000 years ago suddenly came to an end around 11,500 years ago — and temperatures in Greenland rose 10 degrees C in a decade.

Evidence for such dramatic climate shifts laid to rest any lingering ideas that global climate change would be slow and unlikely to occur on a timescale that humans should worry about. “It’s an important reminder of how ‘tippy’ things can be,” says Jessica Tierney, a paleoclimatologist at the University of Arizona in Tucson.

More evidence of global change came from Earth-observing satellites, which brought a new planet-wide perspective on global warming beginning in the 1960s. From their viewpoint in the sky, satellites have measured the rise in global sea level — currently 3.4 millimeters per year and accelerating, as warming water expands and as ice sheets melt — as well as the rapid decline in ice left floating on the Arctic Ocean each summer at the end of the melt season. Gravity-sensing satellites have “weighed” the Antarctic and Greenlandic ice sheets from above since 2002, reporting that more than 400 billion metric tons of ice are lost each year.

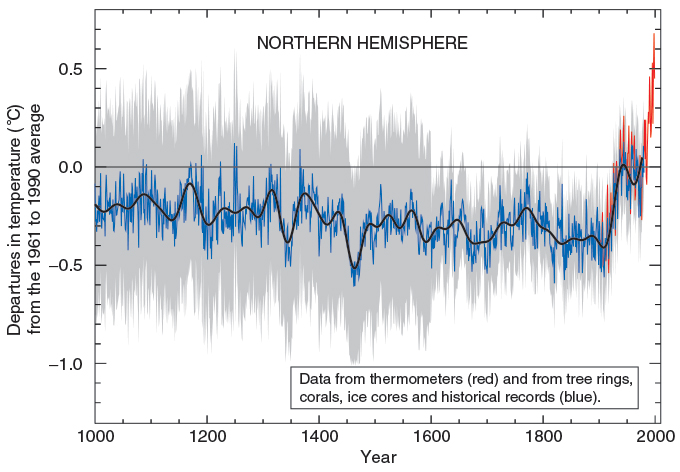

Temperature observations taken at weather stations around the world also confirm that we are living in the hottest years on record. The 10 warmest years since record keeping began in 1880 have all occurred since 2005. And nine of those 10 have come since 2010.

Worrisome predictions

By the 1960s, there was no denying that the planet was warming. But understanding the consequences of those changes — including the threat to human health and well-being — would require more than observational data. Looking to the future depended on computer simulations: complex calculations of how energy flows through the planetary system.

A first step in building such climate models was to connect everyday observations of weather to the concept of forecasting future climate. During World War I, British mathematician Lewis Fry Richardson imagined tens of thousands of meteorologists, each calculating conditions for a small part of the atmosphere but collectively piecing together a global forecast.

But it wasn’t until after World War II that computational power turned Richardson’s dream into reality. In the wake of the Allied victory, which relied on accurate weather forecasts for everything from planning D-Day to figuring out when and where to drop the atomic bombs, leading U.S. mathematicians acquired funding from the federal government to improve predictions. In 1950, a team led by Jule Charney, a meteorologist at the Institute for Advanced Study in Princeton, N.J., used the ENIAC, the first U.S. programmable, electronic computer, to produce the first computer-driven regional weather forecast. The forecasting was slow and rudimentary, but it built on Richardson’s ideas of dividing the atmosphere into squares, or cells, and computing the weather for each of those. The work set the stage for decades of climate modeling to follow.

By 1956, Norman Phillips, a member of Charney’s team, had produced the world’s first general circulation model, which captured how energy flows between the oceans, atmosphere and land. The field of climate modeling was born.

The work was basic at first because early computers simply didn’t have enough computational power to simulate all aspects of the planetary system.

An important breakthrough came in 1967, when meteorologists Syukuro Manabe and Richard Wetherald — both at the Geophysical Fluid Dynamics Laboratory in Princeton, a lab born from Charney’s group — published a paper in the Journal of the Atmospheric Sciences that modeled connections between Earth’s surface and atmosphere and calculated how changes in CO2 would affect the planet’s temperature. Manabe and Wetherald were the first to build a computer model that captured the relevant processes that drive climate, and to accurately simulate how the Earth responds to those processes.

The rise of climate modeling allowed scientists to more accurately envision the impacts of global warming. In 1979, Charney and other experts met in Woods Hole, Mass., to try to put together a scientific consensus on what increasing levels of CO2 would mean for the planet. The resulting “Charney report” concluded that rising CO2 in the atmosphere would lead to additional and significant climate change.

In the decades since, climate modeling has gotten increasingly sophisticated. And as climate science firmed up, climate change became a political issue.

Backlash

The rising public awareness of climate change, and battles over what to do about it, emerged alongside awareness of other environmental issues in the 1960s and ’70s. Rachel Carson’s 1962 book Silent Spring, which condemned the pesticide DDT for its ecological impacts, catalyzed environmental activism in the United States and led to the first Earth Day in 1970.

In 1974, scientists discovered another major global environmental threat — the Antarctic ozone hole, which had some important parallels to and differences from the climate change story. Chemists Mario Molina and F. Sherwood Rowland, of the University of California, Irvine, reported that chlorofluorocarbon chemicals, used in products such as spray cans and refrigerants, caused a chain of reactions that gnawed away at the atmosphere’s protective ozone layer. The resulting ozone hole, which forms over Antarctica every spring, allows more ultraviolet radiation from the sun to make it through Earth’s atmosphere and reach the surface, where it can cause skin cancer and eye damage.

Governments worked under the auspices of the United Nations to craft the 1987 Montreal Protocol, which strictly limited the manufacture of chlorofluorocarbons. In the years following, the ozone hole began to heal. But fighting climate change is proving to be far more challenging. Transforming entire energy sectors to reduce or eliminate carbon emissions is much more difficult than replacing a set of industrial chemicals.

In 1980, though, researchers took an important step toward banding together to synthesize the scientific understanding of climate change and bring it to the attention of international policy makers. It started at a small scientific conference in Villach, Austria, on the seriousness of climate change. On the train ride home from the meeting, Swedish meteorologist Bert Bolin talked with other participants about how a broader, deeper and more international analysis was needed. In 1988, a United Nations body called the Intergovernmental Panel on Climate Change, the IPCC, was born. Bolin was its first chairperson.

The IPCC became a highly influential and unique body. It performs no original scientific research; instead, it synthesizes and summarizes the vast literature of climate science for policy makers to consider — primarily through massive reports issued every couple of years. The first IPCC report, in 1990, predicted that the planet’s global mean temperature would rise more quickly in the following century than at any point in the last 10,000 years, due to increasing greenhouse gases in the atmosphere.

IPCC reports have played a key role in providing scientific information for nations discussing how to stabilize greenhouse gas concentrations. This process started with the Rio Earth Summit in 1992, which resulted in the U.N. Framework Convention on Climate Change. Annual U.N. meetings to tackle climate change led to the first international commitments to reduce emissions, the Kyoto Protocol of 1997. Under it, developed countries committed to reduce emissions of CO2 and other greenhouse gases. By 2007, the IPCC had declared that the reality of climate warming is “unequivocal.” The group received the Nobel Peace Prize that year, along with Al Gore, for their work on climate change.

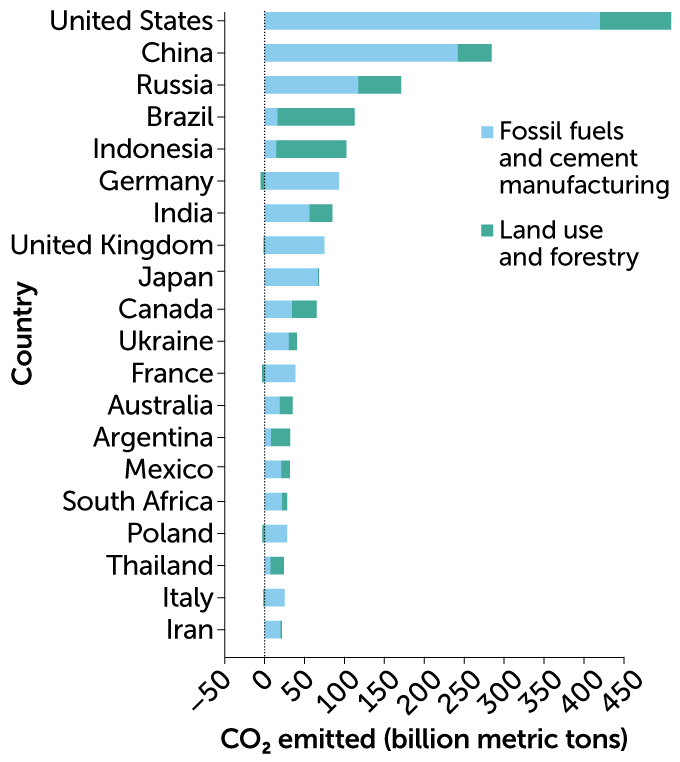

The IPCC process ensured that policy makers had the best science at hand when they came to the table to discuss cutting emissions. Of course, nations did not have to abide by that science — and they often didn’t. Throughout the 2000s and 2010s, international climate meetings discussed less hard-core science and more issues of equity. Countries such as China and India pointed out that they needed energy to develop their economies and that nations responsible for the bulk of emissions through history, such as the United States, needed to lead the way in cutting greenhouse gases.

Meanwhile, residents of some of the most vulnerable nations, such as low-lying islands that are threatened by sea level rise, gained visibility and clout at international negotiating forums. “The issues around equity have always been very uniquely challenging in this collective action problem,” says Rachel Cleetus, a climate policy expert with the Union of Concerned Scientists in Cambridge, Mass.

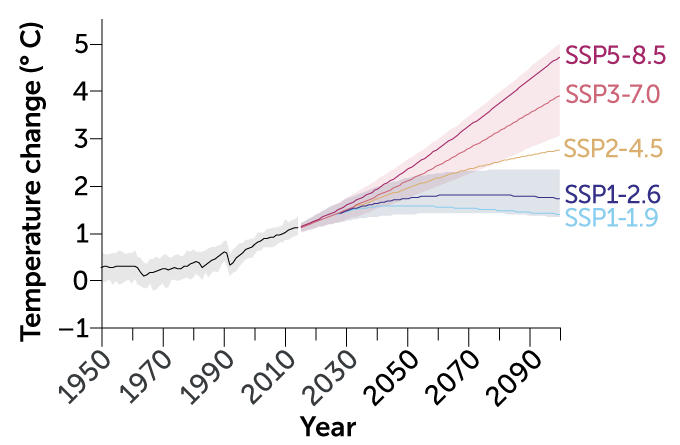

By 2015, the world’s nations had made some progress on the emissions cuts laid out in the Kyoto Protocol, but it was still not enough to achieve substantial global reductions. That year, a key U.N. climate conference in Paris produced an international agreement to try to limit global warming to 2 degrees C, and preferably 1.5 degrees C, above preindustrial levels.

Every country has its own approach to the challenge of addressing climate change. In the United States, which gets approximately 80 percent of its energy from fossil fuels, sophisticated efforts to downplay and critique the science led to major delays in climate action. For decades, U.S. fossil fuel companies such as ExxonMobil worked to influence politicians to take as little action on emissions reductions as possible.

Such tactics undoubtedly succeeded in feeding politicians’ delay on climate action in the United States, most of it from Republicans. President George W. Bush withdrew the country from the Kyoto Protocol in 2001; Donald Trump similarly rejected the Paris accord in 2017. As late as 2015, the chair of the Senate’s environment committee, James Inhofe of Oklahoma, brought a snowball into Congress on a cold winter’s day to argue that human-caused global warming is a “hoax.”

In Australia, a similar mix of right-wing denialism and fossil fuel interests has kept climate change commitments in flux, as prime ministers are voted in and out over fierce debates about how the nation should act on climate.

Yet other nations have moved forward. Some European countries such as Germany aggressively pursued renewable energies, including wind and solar, while activists such as Swedish teenager Greta Thunberg — the vanguard of a youth-action movement — pressured their governments for more.

In recent years, the developing economies of China and India have taken center stage in discussions about climate action. China, which is now the world’s largest carbon emitter, declared several moderate steps in 2021 to reduce emissions, including that it would stop building coal-burning power plants overseas. India announced it would aim for net-zero emissions by 2070, the first time it has set a date for this goal.

Yet such pledges continue to be criticized. At the 2021 U.N. Climate Change Conference in Glasgow, Scotland, India was globally criticized for not committing to a complete phaseout of coal — although the two top emitters, China and the United States, have not themselves committed to phasing out coal. “There is no equity in this,” says Aayushi Awasthy, an energy economist at the University of East Anglia in England.

Facing the future

In many cases, changes are coming faster than scientists had envisioned a few decades ago. The oceans are becoming more acidic as they absorb CO2, harming tiny marine organisms that build protective calcium carbonate shells and are the base of the marine food web. Warmer waters are bleaching coral reefs. Higher temperatures are driving animal and plant species into areas in which they previously did not live, increasing the risk of extinction for many.

No place on the planet is unaffected. In many areas, higher temperatures have led to major droughts, which dry out vegetation and provide additional fuel for wildfires such as those that have devastated Australia, the Mediterranean and western North America in recent years.

Then there’s the Arctic, where temperatures are rising at more than twice the global average and communities are at the forefront of change. Permafrost is thawing, destabilizing buildings, pipelines and roads. Caribou and reindeer herders worry about the increased risk that parasites pose to the health of their animals. With less sea ice available to buffer the coast from storm erosion, the Inupiat village of Shishmaref, Alaska, risks crumbling into the sea. It will need to move from its sand-barrier island to the mainland.

“We know these changes are happening and that the Titanic is sinking,” says Louise Farquharson, a geomorphologist at the University of Alaska Fairbanks who monitors permafrost and coastal change around Alaska. All around the planet, those who depend on intact ecosystems for their survival face the greatest threat from climate change. And those with the fewest resources to adapt to climate change are the ones who feel it first.

“We are going to warm,” says Claudia Tebaldi, a climate scientist at Lawrence Berkeley National Laboratory in California. “There is no question about it. The only thing that we can hope to do is to warm a little more slowly.”

That’s one reason why the IPCC report released in 2021 focuses on anticipated levels of global warming. There is a big difference between the planet warming 1.5 degrees versus 2 degrees or 2.5 degrees. Each fraction of a degree of warming increases the risk of extreme events such as heat waves and heavy rains, leading to greater global devastation.

The future rests on how much nations are willing to commit to cutting emissions and whether they will stick to those commitments. It’s a geopolitical balancing act the likes of which the world has never seen.

Science can and must play a role going forward. Improved climate models will illuminate what changes are expected at the regional scale, helping officials prepare. Governments and industry have crucial parts to play as well. They can invest in technologies, such as carbon sequestration, to help decarbonize the economy and shift society toward more renewable sources of energy.

Huge questions remain. Do voters have the will to demand significant energy transitions from their governments? How can business and military leaders play a bigger role in driving climate action? What should be the role of low-carbon energy sources that come with downsides, such as nuclear energy? How can developing nations achieve a better standard of living for their people while not becoming big greenhouse gas emitters? How can we keep the most vulnerable from being disproportionately harmed during extreme events, and incorporate environmental and social justice into our future?

These questions become more pressing each year, as carbon dioxide accumulates in our atmosphere. The planet is now at higher levels of CO2 than at any time in the last 3 million years.

At the U.N. climate meeting in Glasgow in 2021, diplomats from around the world agreed to work more urgently to shift away from using fossil fuels. They did not, however, adopt targets strict enough to keep the world below a warming of 1.5 degrees.

It’s been well over a century since chemist Svante Arrhenius recognized the consequences of putting extra carbon dioxide into the atmosphere. Yet the world has not pulled together to avoid the most dangerous consequences of climate change.

Time is running out.